(Source: Tesla Autopilot 2016, YouTube)

Following the first fatal accident involving a self-driving vehicle, the debate has been renewed over whether autonomous cars are ready to share the road with traditional vehicles.

The accident, which occurred in Florida on May 7th, happened when a Tesla Model S collided with a tractor-trailer that had made a left turn in front of the vehicle. The Tesla was in self-driving mode at the time of the accident, and the software failed to recognize the tractor-trailer and apply the brakes in time.

A formal investigation is being conducted, and Tesla declined to say whether the technology or driver could have prevented the accident. In a news release following the fatal crash, Tesla said, “Neither autopilot nor the driver noticed the white side of the tractor-trailer against a brightly lit sky, so the brake was not applied.”

Regardless of the investigation’s outcome, the accident highlighted many of the questions still plaguing self-driving cars.

Yesterday, The New York Times published an opinion piece suggesting that self-driving cars should be held to the same standards as new drivers. The article, “A 16-year-old needs a license. Shouldn’t a self-driving car?” makes some solid points about the lack of federal regulation, current limitations of the technology, and the need to address potential safety concerns.

As we’ve written before, federal guidelines need to be established for the testing and usage of self-driving vehicles. As it stands, only eight states (and Washington, D.C.) have basic legislation regulating the driving and testing of autonomous cars. This is not sufficient.

The opinion piece suggests holding self-driving technology to at least the same standards a new driver would be subjected to before receiving a license. While it’s impossible to argue with the idea that a self-driving car should be at least as proficient as a 16-year-old, the reality is that self-driving cars will and should be held to a much higher standard.

According to the CDC, 2,163 U.S. teens between the ages of 16 and 19 were killed in auto accidents in 2013. Another 243,243 were treated in emergency departments for injuries suffered in motor vehicle crashes. That means roughly six teens (ages 16–19) die every day from motor vehicle injuries. If autonomous cars can’t offer a significant improvement on those grim statistics, there’s no way manufacturers are going to convince an already skeptical public to get behind the (self-driving) wheel.

The NYT article proposes, “We should establish a graduated license system similar to that in place for human drivers, in which the license for a class of self-driving vehicle is limited to the situations that it can safely negotiate. A full license would be granted only when the vehicles pass an unrestricted test.”

While this is a nice idea on paper, the reality is that defining what constitutes “a situation that [self-driving vehicles] can safely negotiate” is much easier said than done.

Is a 99.9% success rate considered acceptable? Would a 0.1% failure rate mean self-driving cars should never be on the road?

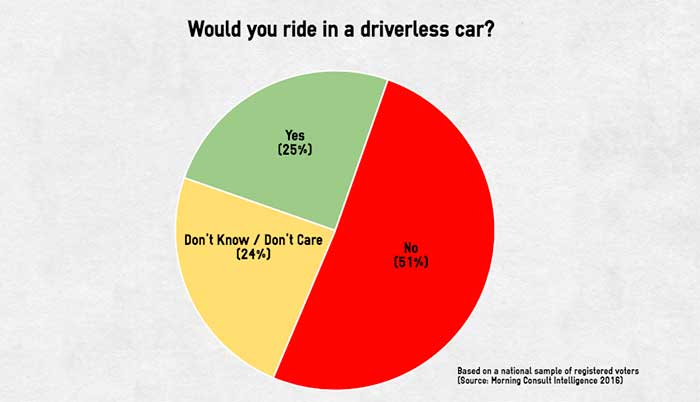

Because fears about safety are reasonable, it’s a short jump to holding self-driving technology to unreasonable standards. The idea that the technology must either be perfect or stay off the road is a false dilemma. In reality, any significant improvement over the currently flawed system should be welcomed with open arms.

The article is correct in stating that there’s still significant room for improvement. And the suggestion of restricting the technology’s use in situations that are more likely to result in accidents has merit. However, it would be a shame to let a tragic incident involving a self-driving car overshadow the fact that our roads are already riddled with accidents.

If it were an issue of taking and passing a standard driving test, self-driving cars would likely outperform 16-year-olds by a wide margin. That’s a ridiculously low bar to set.

Conversely, expecting absolute perfection is a surefire way to delay technology that could save countless lives. Let’s not allow an inflated sense of our own driving abilities to blind us to the fact that technology can help. Accidents will continue to happen, and we should learn from each one. But pretending that sharing the road with self-driving cars is somehow scarier than sharing it with other drivers is irrational and counterproductive.